The recently formed Ada Initiative, a non-profit organization dedicated to increasing participation of women in open technology and culture, has been soliciting responses for their inaugural activity, a census of women in open technology and culture. The name is a little misleading as it is open for anyone to respond to, irrespective of gender, the idea being to get a feel for the community’s perception of women’s participation in this area of society. It’s very quick to take and I would encourage everyone who is involved in this area to participate.


NUMA, memory binding and how swap can dilute your locality

On the hwloc-devel mailing list Jeff Squyres from Cisco posted a message saying:

Someone just made a fairly disturbing statement to me in an Open MPI bug ticket: if you bind some memory to a particular NUMA node, and that memory later gets paged out, then it loses its memory binding information — meaning that it can effectively get paged back in at any physical location. Possibly even on a different NUMA node.

Now this sparked off an interesting thread on the issue, with the best explanation for it being provided by David Singleton from ANU/NCI:

Unless it has changed very recently, Linux swapin_readahead is the main culprit in messing with NUMA locality on that platform. Faulting a single page causes 8 or 16 or whatever contiguous pages to be read from swap. An arbitrary contiguous range of pages in swap may not even come from the same process far less the same NUMA node. My understanding is that since there is no NUMA info with the swap entry, the only policy that can be applied to is that of the faulting vma in the faulting process. The faulted page will have the desired NUMA placement but possibly not the rest. So swapping mixes different process’ NUMA policies leading to a “NUMA diffusion process”.

So when your page gets swapped back in it will drag in a heap of pages that may have nothing to do with it, and those pages may end up on the wrong NUMA node. Or worse still, your page could be one of the bunch dragged back in when a process on a different NUMA node swaps something back in. This will dilute the locality of the pages. Not fun!
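To make the effect concrete, here is a toy model in Python. It is only a sketch of the behaviour David describes, not of the kernel code: the aligned 8 page window, the alternating swap layout and all the names (`swap_in`, `swap_slots` and so on) are assumptions made up for illustration.

```python
READAHEAD_PAGES = 8  # the quoted post says "8 or 16 or whatever"


def swap_in(swap_slots, faulted_slot, faulting_node, readahead=READAHEAD_PAGES):
    """Fault one swap slot and drag in the rest of its readahead window.

    swap_slots[i] is the NUMA node the page in slot i was originally bound to.
    Swap entries carry no NUMA information, so every page in the window is
    placed according to the faulting process (here simply `faulting_node`).
    Returns a list of (wanted_node, actual_node) pairs.
    """
    # Assumption: the window is aligned to a multiple of the readahead size.
    start = (faulted_slot // readahead) * readahead
    window = swap_slots[start:start + readahead]
    return [(wanted_node, faulting_node) for wanted_node in window]


def count_misplaced(placements):
    """Count pages that ended up on a node other than the one they wanted."""
    return sum(1 for wanted, actual in placements if wanted != actual)


# Hypothetical swap area in which pages bound to node 0 and node 1 alternate.
swap_slots = [slot % 2 for slot in range(16)]

# A process running on node 0 faults the page in slot 4.
placements = swap_in(swap_slots, faulted_slot=4, faulting_node=0)
misplaced = count_misplaced(placements)
print(f"{misplaced} of {len(placements)} pages landed on the wrong node")
```

In this made-up layout the faulted page itself is placed correctly, but half of the pages that come along for the ride belonged to node 1 and are now sitting on node 0.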

How computers fail to entice people into being programmers

An old friend of mine from the UK, Steve Usher, has pretty much nailed things with this blog post on “Enthusing teen minds: Why today’s computers won’t create tomorrow’s programmers”. He says:

The computers of the early 80s were a blank canvas. You plugged them in, switched them on and (hopefully) the input cursor blinked at you. There was no decoration, no clutter and it was something waiting for YOU to do something to it.

Ah yes, I remember those days, when 3.5KB was a lot of memory! But what about today’s computers?

They’re immediately brimming full of functionality all vying for your attention, but it’s also incredibly locked down. You can do absolutely anything… ANYTHING as long as it’s what the visionary who steered the programming teams thinks that you should want to do. Woe betide you if you want to do anything different. It’ll either ignore you or give you an unhelpful suggestion in a dialog box. You can be creative, but only in the ways you’re told you can be.

But before we free software types get all puffy and “I told you so”, he points out that things aren’t that much better on our systems with all our SDKs, IDEs, toolkits, compilers and interpreters:

It’s like taking a 5 year old into an engineering workshop, sitting him down and then complaining when he doesn’t build a car as he had all the tools available to him to do it and hence it must be his fault.

I’m not as sure that we need to build something new from scratch though. I think it might be more the case that we need to sort through all the various projects that could fit what he is after and build a distro (of whatever OS) that boots up straight into that application and lets them play with it. Perhaps something like SDLbasic (a BASIC interpreter for game development) might be a good start?

Why Creative Commons Rocks

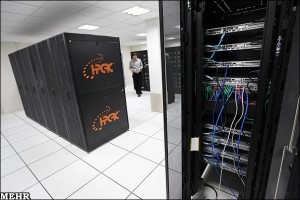

Iran’s New GPU Powered Supercomputer(s) ? (Updated x 2)

ComputerWorld has an article about Iran claiming to have two new supercomputers, fairly modest by Top500 standards, but laments the lack of details:

But Iran’s latest supercomputer announcement appears to have no details about the components used to build the systems. Iranian officials have not yet responded to request for details about them.

However, looking at the Iranian photo spread that they link to (which appears to be slashdot’ed now) the boxes in question are SuperMicro based systems (and so could be sourced from just about anywhere), with some of their 2U storage based boxes with heaps of disk and both 2U and some 1U boxes which are presumably the compute nodes. The odd thing is that they’re spaced out quite a bit in the rack, and the 1U systems have two fans on the left hand side (which indicates something unusual about the layout of the box). Here’s an image from that Iranian news story:

The nice thing is that it’s pretty easy to find a slew of boxes on SuperMicro’s website that match the picture: it’s their 1U GPU node range, which are dual GPU beasts, for example:

The problem is that this range goes back a few years, for example appearing in an nVidia presentation on “the worlds fastest 1U server” from 2009. HPC-Wire describe these original nodes as:

Inside the SS6016T-GF Supermicro box, the two M1060 GPUs modules are on opposite sides of the server chassis in a mirror image configuration, where one is facing up, the other facing down, allowing the heat to be distributed more evenly. The NVIDIA M1060 part uses a passive heat sink, and is cooled in conjunction with the rest of the server, which contains a total of eight counter-rotating fans. Supermicro also builds a variant of this model, in which it uses a Tesla C1060 card in place of the M1060. The C1060 has the same technical specs as the M1060, the principle difference being that the C1060 has an active fan heat sink of its own. In both instances though, the servers require plenty of juice. Supermicro uses a 1,400 watt power supply to drive these CPU-GPU hybrids.

According to the HPC-Wire article the whole system (2 x CPUs, 2 x GPUs) is rated at about 2TF for single-precision FP. nVidia rate the M1060 card at 933 GF (SP) and 78 GF (DP) so I’d reckon for DP FP you’re looking at maybe 180 GF per node. But now that range includes ones with the newer M2070 Fermi GPUs which can do 1030 GF (SP) and 515 GF (DP) and would get you up to just over 2TF SP and (more importantly for Top500 rankings) over 1TF DP per node.
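As a rough check on those per-node figures, here is a small Python sketch that simply doubles the per-card numbers quoted above. It only counts the GPUs; my reading is that the ~180 GF guess for the M1060 nodes also allows a little for the two CPUs, which this sketch does not model. The names are made up for illustration.

```python
# Peak GFLOPS per GPU card, as quoted in the post.
GPU_PEAK_GF = {
    "M1060": {"sp": 933, "dp": 78},
    "M2070": {"sp": 1030, "dp": 515},
}
GPUS_PER_NODE = 2  # the 1U boxes are dual GPU nodes


def node_gpu_peak_gf(model, precision):
    """Peak GFLOPS contributed by the GPUs alone in one node."""
    return GPUS_PER_NODE * GPU_PEAK_GF[model][precision]


for model in GPU_PEAK_GF:
    sp = node_gpu_peak_gf(model, "sp")
    dp = node_gpu_peak_gf(model, "dp")
    print(f"{model}: {sp} GF single precision, {dp} GF double precision per node")
```

For the M2070 that comes to 2060 GF single precision and 1030 GF double precision, which is where the "just over 2TF" and "over 1TF" figures come from.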

Now if we assume the claimed 89TF for the larger system is correct, that it is indeed double precision (to be valid for the Top500), they measured it with HPL and assume an efficiency of about 0.5 (which seems about what a top ranked GPU cluster achieves with Infiniband) we can play some number games. Numbers below invalidated by Jeff Johnson’s observation, see the “updated” section for more!

If we assume these are older M1060 GPUs then you are looking at something in the order of 1000 compute nodes to be able to get that Rmax in Linpack, something of the order of 1MW. From the photos though I didn’t get the sense that it was that large; the way they spaced them out you’d need maybe 200 racks for the whole thing and that would have made an impressive photo (plus an awful lot of switches). Now if they’ve managed to get their hands on the newer M2070 based nodes then you could be looking at maybe 200 nodes, a more reasonable 280kW and maybe 40 racks. But I still didn’t get the sense that the datacentre was that crowded…
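The number games above can be sketched in a few lines of Python. This is just the back-of-the-envelope arithmetic with the guesses from the post plugged in (89TF claimed, 0.5 efficiency, roughly 0.18TF or 1.03TF of double precision per node); treating the 1,400 watt power supply as the per-node draw is an assumption that gives an upper bound, and the names are invented for illustration.

```python
import math

CLAIMED_RMAX_TF = 89.0  # claimed figure for the larger system
HPL_EFFICIENCY = 0.5    # guessed Rmax / Rpeak ratio for a GPU cluster
NODE_POWER_KW = 1.4     # assumption: each node draws its full 1,400 W supply

# Double precision peak per node in TF: ~180 GF guess for M1060 nodes,
# 2 x 515 GF for M2070 nodes.
NODE_DP_PEAK_TF = {"M1060": 0.18, "M2070": 1.03}


def nodes_needed(rmax_tf, node_peak_tf, efficiency):
    """Nodes required so that Rpeak * efficiency reaches the target Rmax."""
    rpeak_needed_tf = rmax_tf / efficiency
    return math.ceil(rpeak_needed_tf / node_peak_tf)


estimates = {
    model: nodes_needed(CLAIMED_RMAX_TF, peak, HPL_EFFICIENCY)
    for model, peak in NODE_DP_PEAK_TF.items()
}

for model, nodes in estimates.items():
    print(f"{model}: {nodes} nodes, up to {nodes * NODE_POWER_KW:.0f} kW")
```

The results land a little under the round numbers in the text (which rounds up generously), but they are in the same ballpark: about a thousand M1060 nodes versus a couple of hundred M2070 nodes.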

So I’d guess that instead of actually running Linpack on the system they’ve just totted up the Rpeaks, in which case you would get away with about 90 of the M2070 nodes, so maybe 15 or 20 racks, which feels to me more like the scale depicted in the images. That would still give them a system that would hit about 40TF and give it a respectable ranking around 250 on the Top500 IF they used Infiniband to connect it up. If it was gigabit ethernet then you’d be looking at maybe another 50% hit to its Rmax and that would drop it out of the Top500 altogether as you’d need at least 31TF to qualify in last November’s list.

It’ll certainly be interesting to see what the system is if/when any more info emerges!

Update In the comments Jeff Johnson has pointed out the 2U boxes are actually 4-in-2U dual socket nodes, i.e. will likely have either 32 or 48 cores depending on whether they contain quad core or six core chips. You can see that best from this rear shot of a rack:

There are mostly 8 of those units in a rack (though the rack at one end of the block has just 7, but there are 2 extra in a central rack below what may be an IB switch), so that’s 256 cores a rack if they’re quad core. There are 8 racks in a block and two blocks to a row so we’ve got 4,096 cores in that one row, or 6,144 if they’re 6 core chips!
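The core counting is easy to check with a few lines of Python. This is only a sketch of the arithmetic in the two paragraphs above, with the layout numbers taken from the photos as described and the constant and function names made up for illustration.

```python
NODES_PER_2U_UNIT = 4  # 4-in-2U chassis
SOCKETS_PER_NODE = 2   # dual socket nodes
UNITS_PER_RACK = 8     # "mostly 8 of those units in a rack"
RACKS_PER_BLOCK = 8
BLOCKS_PER_ROW = 2


def core_counts(cores_per_socket):
    """Return (cores per 2U unit, cores per rack, cores per row)."""
    per_unit = NODES_PER_2U_UNIT * SOCKETS_PER_NODE * cores_per_socket
    per_rack = per_unit * UNITS_PER_RACK
    per_row = per_rack * RACKS_PER_BLOCK * BLOCKS_PER_ROW
    return per_unit, per_rack, per_row


for label, cores_per_socket in (("quad core", 4), ("six core", 6)):
    per_unit, per_rack, per_row = core_counts(cores_per_socket)
    print(f"{label}: {per_unit} per unit, {per_rack} per rack, {per_row} per row")
```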

The row with the GPU nodes is harder to make out as we cannot see the whole row from any combination of the photos, but in the front view we can see 2 populated racks of a block of 5, with 8 to a rack. The first rear view shows that the block next to it also has at least 2 racks populated with 8 GPU nodes. The second rear view is handy because whilst it shows the same racks as the first it demonstrates that these two blocks coincide with a single block of traditional nodes, raising the possibility of another pair of blocks to pair with the other half of the row of traditional nodes.

Assuming M2070 GPUs then you’re looking at 8TF a rack, or 32TF for the row (assuming no other racks populated outside of our view). If the visible nodes are duplicated on the other side then you’re looking at 64TF.

If we assume that the Intel nodes have quad core Nehalem-EP 2.93GHz then that would compare nicely to a Dell system at Saudi Aramco which is rated at an Rpeak of 48TF. Adding the 32TF for the visible GPU nodes gets us up to 80TF, which is close to their reported number, but still short (and still for Rpeak, not Rmax). So it’s likely that there are more GPU nodes, either in the 4 racks we cannot see into in the visible block of GPU racks or in another part of that row, or both! That would make a real life Top500 run of 89TF feasible, with Infiniband.
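The 48TF figure also falls out of a direct calculation. The sketch below assumes 4 double precision floating point operations per core per cycle for Nehalem-EP, which is not stated above but is the usual figure used for Rpeak on those chips; the variable names are invented for illustration.

```python
CORES = 4096            # one row of 4-in-2U nodes with quad core chips
CLOCK_GHZ = 2.93        # Nehalem-EP clock speed assumed above
DP_FLOPS_PER_CYCLE = 4  # assumption: double precision flops per core per cycle
GPU_ROW_TF = 32.0       # visible GPU racks, assuming M2070 GPUs

# cores * GHz * flops/cycle gives GFLOPS; divide by 1000 for TFLOPS.
cpu_rpeak_tf = CORES * CLOCK_GHZ * DP_FLOPS_PER_CYCLE / 1000.0
total_rpeak_tf = cpu_rpeak_tf + GPU_ROW_TF

print(f"CPU nodes: {cpu_rpeak_tf:.1f} TF Rpeak")
print(f"CPU + visible GPU nodes: {total_rpeak_tf:.1f} TF Rpeak")
```

That gives 48.0TF for the CPU row and 80.0TF with the visible GPU racks added, matching the numbers in the text.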

Be great to have a floor plan and parts list! 🙂

Update 2

Going through the Iranian news reports they say that the two computers are located at the Amirkabir University of Technology (AUT) in Tehran, and Esfahan University of Technology (in Esfahan). The one in Tehran is the smaller of the two, but the figures they give appear, umm, unreliable. It seems like the usual journalists-not-understanding-tech issue compounded by translation issues – so for instance you get things like:

The supercomputer of Amirkabir University of Technology with graphic power of 22000 billion operations per second is able to process 34000 billion operations per second with the speed of 40GB. […] The supercomputer manufactured in Isfahan University of Technology has calculation ability of 34000 billion operations per second and its Graphics Processing Units (GPU) are able to do more than 32 billion operations per 100 seconds.

and..

Amirkabir University’s supercomputer has a power of 34,000 billion operations per second, and a speed of 40 gigahertz. […] The other supercomputer project carried out by Esfahan University of Technology is among the world’s top 500 supercomputers.

So is the AUT (Tehran) system 22TF or 34TF? Could it be Rpeak 34TF and Rmax 22TF? Is the Esfahan one 34TF (which would just creep onto the Top500) or higher?

Unfortunately it’s the Tehran system in the photos, not the Esfahan one (the giveaway is the HPCRC on the racks). So my estimate of 80TF Rpeak for it could well give a measured 32TF if it’s using ethernet as the interconnect (or if the CPUs in the 2U nodes are clocked slower). Or perhaps the GPU nodes are part of something else? That and slower clocked CPUs could bring the Rpeak down to 34TF..

Need more data!

WordPress Upgraded to 3.1

OK, just done an svn switch http://svn.automattic.com/wordpress/tags/3.1 to upgrade my blog to WordPress 3.1, and nothing looks too broken so far. 😉

If you spot any problems do let me know, either by a comment here or by email to chris at this domain name (csamuel.org).

Testing the N900 “Lowlight” Photo Application

Nokia have released some “research prototypes” of applications that use the FCam libraries to do fun things with taking photos on their Nokia N900. One of these is called “Lowlight” and is designed to make it easier to get reasonable photos in, well, low light conditions. I tested this out on Tuesday night after the Linux Users of Victoria meeting, taking a photo of the Old Commerce Building on the University of Melbourne campus.

Now given that’s taken hand-held without a flash, I’m pretty impressed; you can even make out the design of the courtyard floor in front of it! If you go and look at the original full size version on Flickr (CC-BY licensed) you can see there is noise around the outside of the building, and there is an almost oil-paint effect on the details of the carvings on the building due to their algorithm, but given the alternative was nothing at all it’s a great little program!

PPP Dialups for People in Egypt #Egypt #Jan25 – Updated x 6

I’ve noticed two PPP dialups being published for people in Egypt who are struggling to get online due to the cutting of many Internet links into the country.

They are this one seen on a blog on the Internet blackout:

by Anon on January 28th, 2011 10:30 pm “free” PPP dial-up access on Madrid, Spain: +34 912910230 user:internetforegypt@trovator.com pass:internetforegypt We are Legion.

and this seen on Michael Moore’s twitter feed:

People of Egypt! Use this dial-up provided by friends in France 2 go online: +33172890150 (login 'toto' password 'toto') #egypt #jan25

Update #1 – a massive list of dialups listed here – http://werebuild.eu/wiki/Egypt/Main_Page#Internet_Access

Update #2 – instructions on dialup and more numbers are here – http://manalaa.net/dialup

Update #3 – seen on the telecomix Twitter feed:

One more modem dialup account on Swedish number for #Egypt user/pass tcx/tcx +46850009990 #Jan25 #Jan26

Update #4 – Again via the telecomix Twitter feed:

Another modem dialup number (unconfirmed if works) +46187000800, user: flashback pass: flashback #Egypt #Jan25 #Jan26

Update #5 – Not dialup related, but SMS info from the telecomix Twitter feed:

Vodafone users in Egypt: change your Message Ctr to +20105996713 able to send SMS pls spread #Egypt #Jan25 #Jan26

Update #6 – another dialup number via telecomix feed:

Anonymous dialup service provided by #FDN on +33172890150 login/pasword toto/toto #JAN25 #Egypte |Please RT

If you know of others please leave a comment here with the details!

VLSCI: Systems Administrator – High Performance Computing, Storage & Infrastructure

/*

* Please note: enquiry and application information via URL below, no agencies please!

*

* Must be Australian permanent resident or citizen.

*/

Executive summary

Want to work with hundreds of TB of storage, HPC clusters and a Blue Gene supercomputer and have an aptitude for handling storage and data ?

http://jobs.unimelb.edu.au/jobDetails.asp?sJobIDs=715542

Background

VLSCI currently has in production as stage 1:

- 2048 node, 8192 core IBM Blue Gene/P supercomputer

- 80 node, 640 core IBM iDataplex cluster (Intel Nehalem CPUs)

- ~300TB usable of DDN based IBM GPFS storage plus tape libraries

- 136 node, 1088 core SGI Altix XE cluster (Intel Nehalem CPUs)

- ~110TB usable of Panasas storage

There is a refresh to a much larger HPC installation planned for 2012.

Both Intel clusters are CentOS/RHEL 5, the front end and service nodes for the Blue Gene are SuSE SLES 10. The GPFS servers are RHEL5. Panasas runs FreeBSD under the covers.

Job advert

http://jobs.unimelb.edu.au/jobDetails.asp?sJobIDs=715542

SYSTEMS ADMINISTRATOR, STORAGE & INFRASTRUCTURE

Position no.: 0022139

Employment type: Full-time Fixed Term

Campus: Parkville

Close date: February 3rd, 2011

Victorian Life Sciences Computation Initiative, Melbourne Research

Salary: HEW 7: $69,608 – $75,350 p.a. or HEW 8: $78,313 – $84,765 p.a. plus 17% superannuation.

The Victorian Life Sciences Computation Initiative (VLSCI) is a Victorian Government project hosted at The University of Melbourne which aims to establish a world class Life Sciences Compute Facility for Victorian researchers. The Facility operates a number of supercomputers and storage systems dedicated to High Performance Computing. The VLSCI wishes to recruit a Linux Systems Administrator with knowledge of file systems and an interest in working with technologies such as GPFS, TSM, HSM, NFS.

This position is an opportunity to become involved in leading science and computing fields and work as part of a small but self-contained team. Expect to find yourself learning new skills and developing new and innovative solutions to problems that have not yet been identified. You have every opportunity to make a real difference and will need to contribute to a high level of service and creativity.

More Details

Selection criteria and more details are in the Position Description (PDF) here:

Apologies for the URL shortener, the original URL is a horribly long one.. 🙁

Volunteers for Flood Relief Sought at LCA2011 in Brisbane

Pia Waugh has put up a page on the LCA wiki seeking to recruit and organise attendees who might want to help with the flood recovery in Brisbane. It says:

This page was set up for linux.conf.au attendees who are keen to do some volunteer work to assist the flood victims in Brisbane. A few hundred attendees throwing in a few hours or a day or so to help out will make an enormous difference to people who have lost so much. […] Specific volunteer activities will be planned for the Sunday before the conference and the Saturday following the conference, and there will no doubt also be odd jobs here or there for those who find themselves with a free hour or so throughout the conference.

The page asks volunteers to list themselves there but currently they only have a couple of people on the sheet and I think they could do with some more publicity!

Unfortunately I don’t get to go to linux.conf.au these days (SC in the US is my one work conf now) otherwise I’d be pitching in too.. 🙁